Network Downtime in Telecom: Causes and Reduction

The Measurement Gap in Telecom Downtime

Network downtime is often treated as a clear, measurable event. In practice, it is not always well defined. A full outage is easy to identify, but many customer-impacting events occur without a complete loss of service. Performance degradation, high latency, or intermittent failures may not be classified as downtime, even though users experience the service as unavailable. This creates a gap between how downtime is recorded and how it is perceived.

Most operators rely on OSS and network monitoring systems to detect and report network downtime. These systems track alarms, link failures, and service interruptions. However, different systems do not always report the same event in the same way. A fault detected in one part of the network may not align with access network data or customer-level impact. Each system provides a partial view based on its function, and data exposed through Northbound Interfaces (NBI) is often aggregated or simplified as it moves toward reporting layers, creating a gap between operational reality and what is presented in executive dashboards.

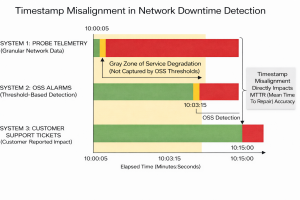

Time alignment is a key constraint. Downtime depends on accurately defining when an incident starts and ends. In many environments, timestamps differ across systems due to variations in synchronization, logging intervals, or time zone handling. This limits the ability to correlate events across OSS, network elements, and customer systems. A single incident may appear as multiple unrelated events.

Operators must also distinguish between planned and unplanned network downtime. Maintenance activities are typically excluded from downtime metrics, but classification is not always consistent. If planned work extends beyond its window or introduces unexpected impact, reporting becomes unclear and comparisons across periods are affected.

The Synchronization Constraint in Telecom Networks

Identifying the cause of downtime introduces further complexity. Hardware failures, fiber cuts, congestion, configuration errors, and configuration drift are common factors. In modern networks, software faults and control plane issues are increasingly relevant. Root cause is rarely visible from a single system and is often inferred from multiple sources that may not fully align.

Combining data across systems is intended to improve clarity. In practice, it can increase complexity. Billing systems, OSS platforms, and network telemetry each describe the network from different perspectives. When these sources are merged, inconsistencies in identifiers, timestamps, and event definitions can fragment the analysis rather than unify it.

Visualization tools such as Tableau improve access to data, but they do not resolve underlying inconsistencies. They present available information in a structured format, but the accuracy of the result depends on the quality and alignment of the source systems.

As networks evolve toward 5G, virtualized, and software-driven architectures, downtime becomes harder to isolate. Failures are no longer always tied to a single physical component. They may involve orchestration layers, software updates, or shared infrastructure constraints. These conditions can produce partial or intermittent service impact that is difficult to detect and classify. These challenges become more relevant in 5G environments, where network slicing and distributed architectures increase the need for more precise monitoring and data alignment.

Monitoring vs Observability in Telecom Downtime

The objective is to reduce downtime, but this can mean different things. It may involve preventing incidents, detecting them earlier, or reducing resolution time. Each approach depends on consistent and reliable data. Without that, improvements may not be reflected in reported metrics.

Downtime metrics are shaped as much by data quality as by network performance. The calculation itself is straightforward. The challenge lies in defining events consistently, aligning data across systems, and interpreting results in a way that reflects actual customer experience.

Traditional monitoring systems indicate when a failure has occurred. They provide visibility into outages and threshold breaches, but they are limited to what is explicitly measured. In contrast, observability focuses on understanding system behavior by analyzing internal states across distributed components. This distinction becomes more relevant as networks move toward virtualized and software-driven architectures, where failures are not always tied to a single event or component.

Network downtime is not only a measure of availability. It is an outcome of how the network is monitored, how events are classified, and how data is interpreted. The focus is not only on reducing downtime, but on improving the ability to understand why it occurs and how similar conditions can be addressed in the future.

Addressing these challenges requires not only better data alignment across systems, but also the ability to translate that data into meaningful operational decisions, as explored in Telecom Analytics: From Data to Operational Decision-Making.

Leave a comment